70: GANsformer2

Compositional Transformers for Scene Generation by Drew A. Hudson and C. Lawrence Zitnik explained in 5 minutes

⭐️Paper difficulty: 🌕🌕🌕🌕🌑

🎯 At a glance:

There have been several attempts to mix together transformers and GANs over the last year or so. One of the most impressive approaches has to be the GANsformer, featuring a novel duplex attention mechanism to deal with the high memory requirements typically imposed by image transformers. Just six months after releasing the original model, the authors deliver a solid follow-up that builds on the ideas for transformer-powered compositional scene generation introduced in the original paper, considerably improving the image quality and enabling explicit control over the styles and locations of objects in the composed scene.

⌛️ Prerequisites:

(Highly recommended reading to understand the core contributions of this paper):

1) GANsformer

🚀 Motivation:

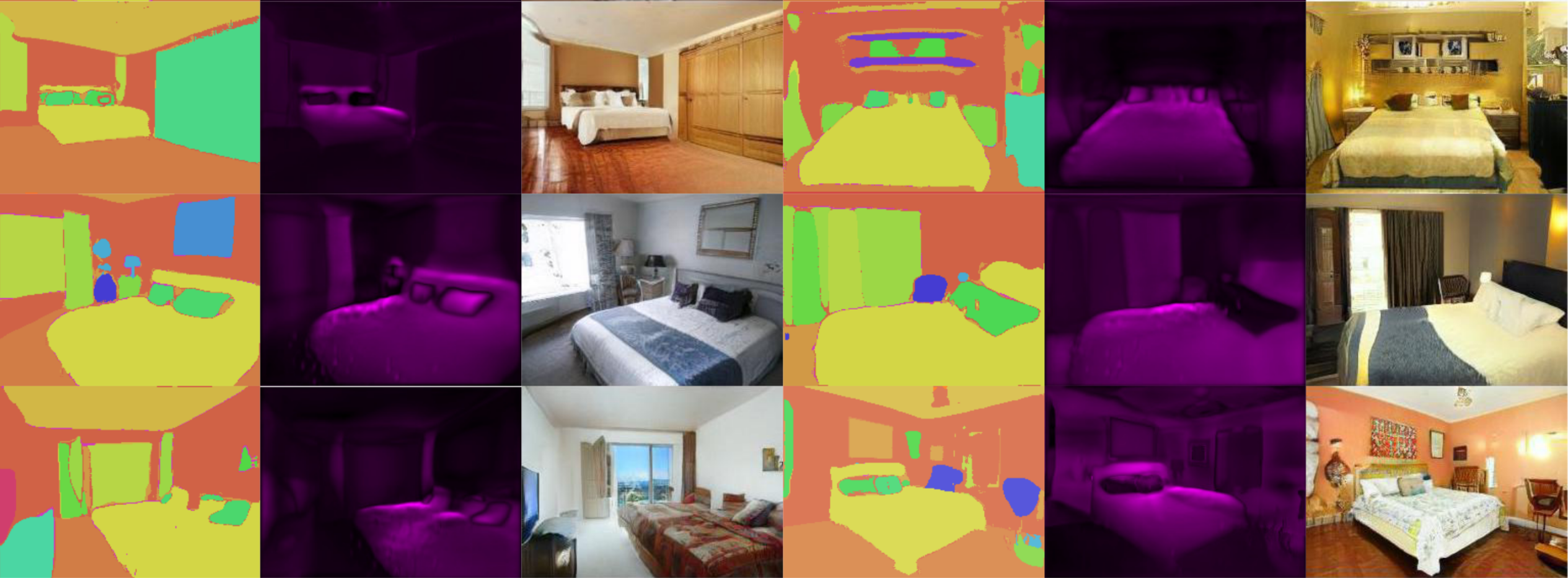

The authors pitch their idea as a rough approximation of the way humans draw: starting with a sketched outline of the scene, more and more details are filled in iteratively until a complex scene emerges. While modern GANs are able to produce photorealistic faces, they lack the compositionality, interpretability, and control required to synthesize complex real-world scenes with many small details. To overcome these weaknesses, the authors split the image generation process into two distinct stages: planning and execution. The goal of the planning stage is to produce a rough semantic layout of the generated image from a set of sampled latent variables. The execution stage, on the other hand, focuses on translating the layout into the final image, with the content and style of each segment guided by its corresponding latent. Both stages use a GANsformer model.

The new object-oriented pipeline improves on the original coarse-to-fine design and leaves conditional models based on static layouts, such as Pix2Pix and SPADE, even further behind in terms of the quality and complexity of the generated images. Moreover, unlike SPADE and Pix2Pix, the proposed GANsformer2 is able to generate its layouts from scratch.

🔍 Main Ideas:

1) GANsformer:

GANsformer is a transformer-based model that translates a set of latent variables into an image. It starts with a 4x4 grid that trades information with the set of latents back and forth via bipartite attention to iteratively build an image through a series of upsampling and convolution layers. The bipartite attention computes attention from the image to the latents and then back from the latents to the image pixels. This back and forth has the effect of aggregating information from semantic regions in the image space to a small number of latents that work at the object level and meaningfully control the style and content of whole regions in an image instead of disparate pixels.
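
To make the back-and-forth concrete, here is a minimal NumPy sketch of one bipartite attention round trip. It is an illustration under simplifying assumptions, not the authors' implementation: there are no learned projections, the updates are plain residual additions, and the names `attend` and `bipartite_attention` are made up for this post.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attend(queries, keys, values):
    """Scaled dot-product attention: every query row mixes the value rows."""
    scores = queries @ keys.T / np.sqrt(queries.shape[-1])
    weights = softmax(scores, axis=-1)
    return weights @ values, weights

def bipartite_attention(pixels, latents):
    """One round trip between a pixel grid and a small set of latents.

    pixels:  (n_pixels, dim) flattened feature grid
    latents: (n_latents, dim) latent variables
    Assumption: residual additive updates, no learned weights.
    """
    # Latents gather information from the image regions they attend to.
    gathered, _ = attend(latents, pixels, pixels)
    latents = latents + gathered
    # Pixels are then updated from the refreshed latents.
    update, pixel_to_latent = attend(pixels, latents, latents)
    pixels = pixels + update
    return pixels, latents, pixel_to_latent

rng = np.random.default_rng(0)
pixels = rng.normal(size=(16, 8))   # a flattened 4x4 grid with 8 channels
latents = rng.normal(size=(3, 8))   # 3 latents
new_pixels, new_latents, assignment = bipartite_attention(pixels, latents)
```

Note that both attention maps have shape pixels x latents (or its transpose), so the cost grows linearly with the number of pixels rather than quadratically as in pixel-to-pixel self-attention.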

2) Planning Stage - Layout Synthesis:

The goal of this stage is to generate a layout composed of several segments, each with a shape, position, semantic category, and a predicted depth ordering. Interestingly, the model receives no supervision on the depth predictions.

A lightweight GANsformer is recursively applied to construct the layout. At each step, the layout segments are generated by the GANsformer from a varying number of sampled latents (structure latents) that guide their segments. The query of the bipartite attention is built from the current layout and the previous layout, concatenated along the channel dimension, so that the model is explicitly conditioned on the layouts from earlier steps.

A favorable side effect of this recurrent approach is that it enables object manipulation. It is possible to change one or more of the structural latents and generate the “next” layout with the current layout used as the “previous” condition.

The layout segments are overlaid according to their predicted shapes and depths and passed through a softmax function to obtain a distribution over all segments for each pixel. This is useful for modeling occlusions.
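
Here is a small sketch of how such a depth-aware overlay could work. The assumption (mine, not necessarily the paper's exact formulation) is that each segment's shape logits and depth logits are simply summed before the per-pixel softmax, with higher depth logits meaning "closer to the camera". The function name `compose_layout` is hypothetical.

```python
import numpy as np

def compose_layout(shape_logits, depth_logits):
    """Overlay segments into a per-pixel distribution over segments.

    shape_logits: (n_segments, H, W), high where a segment is present
    depth_logits: (n_segments, H, W), higher = closer to the camera
    Assumption: shape and depth logits are summed before the softmax.
    """
    logits = shape_logits + depth_logits
    logits = logits - logits.max(axis=0, keepdims=True)  # numerical stability
    weights = np.exp(logits)
    return weights / weights.sum(axis=0, keepdims=True)

# Two segments on a 1x3 strip that overlap on the middle pixel.
shape_logits = np.array([[[5.0, 5.0, -5.0]],
                         [[-5.0, 5.0, 5.0]]])
# Segment 1 is predicted to be in front of segment 0.
depth_logits = np.stack([np.zeros((1, 3)), np.full((1, 3), 2.0)])

layout = compose_layout(shape_logits, depth_logits)
visible = layout.argmax(axis=0)  # which segment wins at each pixel
```

On the overlapping middle pixel the closer segment 1 wins, so segment 0 is occluded there while still owning the left pixel.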

Note that since the generated layouts are likely to be soft and noisy, whereas the real layouts are discrete, a hierarchical noise map is added to the layouts fed to the discriminator to ensure that its task remains challenging during training.
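
The digest does not spell out how the hierarchical noise is built, so the following is only one plausible reading: Gaussian noise is drawn at several resolutions, upsampled to the layout size, and summed. The function name, the nearest-neighbor upsampling, and the `scale` value are all assumptions for illustration.

```python
import numpy as np

def hierarchical_noise(size, n_channels, rng, scale=0.1):
    """Sum Gaussian noise drawn at resolutions 1, 2, 4, ..., size.

    Each coarse noise grid is upsampled (nearest neighbor) to size x size.
    Assumption: size is a power of two; scale is an arbitrary choice.
    """
    noise = np.zeros((n_channels, size, size))
    res = 1
    while res <= size:
        coarse = rng.normal(scale=scale, size=(n_channels, res, res))
        factor = size // res
        noise += coarse.repeat(factor, axis=1).repeat(factor, axis=2)
        res *= 2
    return noise

rng = np.random.default_rng(0)
labels = rng.integers(0, 3, size=(8, 8))          # a discrete 8x8 layout
one_hot = np.eye(3)[labels].transpose(2, 0, 1)    # (3, 8, 8) one-hot layout
noisy_layout = one_hot + hierarchical_noise(8, 3, rng)
```

After this step a discrete layout is no longer trivially distinguishable from a soft generated one by checking for exact zeros and ones.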

3) Execution Stage - Layout-to-Image Translation:

Let’s remind ourselves that the goal of this stage is to generate a realistic image from the layout obtained in the planning stage.

First, the mean depth and label of each segment are concatenated to its latents, which are mapped with an MLP into the Style Latents.
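
As a rough sketch of this step: the label encoding (one-hot), the MLP depth (two layers with a ReLU), and all the sizes below are my assumptions, and the weights are random stand-ins for learned parameters.

```python
import numpy as np

def style_latents(latents, mean_depth, labels, n_classes, w1, b1, w2, b2):
    """Map per-segment latents plus depth and label into style latents.

    latents:    (n_segments, latent_dim)
    mean_depth: (n_segments,) mean predicted depth of each segment
    labels:     (n_segments,) integer semantic category of each segment
    Assumption: one-hot labels and a two-layer ReLU MLP.
    """
    one_hot = np.eye(n_classes)[labels]                        # (n_segments, n_classes)
    x = np.concatenate([latents, mean_depth[:, None], one_hot], axis=1)
    hidden = np.maximum(x @ w1 + b1, 0.0)                      # ReLU
    return hidden @ w2 + b2

rng = np.random.default_rng(0)
n_segments, latent_dim, n_classes, hidden_dim, style_dim = 4, 6, 5, 16, 8
in_dim = latent_dim + 1 + n_classes                            # latent + depth + label
w1, b1 = rng.normal(size=(in_dim, hidden_dim)), np.zeros(hidden_dim)
w2, b2 = rng.normal(size=(hidden_dim, style_dim)), np.zeros(style_dim)

styles = style_latents(
    rng.normal(size=(n_segments, latent_dim)),
    rng.uniform(size=n_segments),
    np.array([0, 2, 2, 4]),
    n_classes, w1, b1, w2, b2,
)
```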

The actual image is generated with another GANsformer that uses the precomputed layout in the first half of bipartite attention (latents attending to pixels) instead of predicting it on the fly and modifies the synthesized image with the other half of bipartite attention (pixels attending to latents). There are, additionally, learnable sigmoid gates that control the modulation strength of each latent-to-segment distribution and allow the model to refine the layouts at the execution stage.
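
One way to picture the gated, layout-driven half of the attention is sketched below. This is a loose interpretation rather than the paper's formulation: the layout is used directly as the pixel-to-latent assignment, each latent gets a scalar sigmoid gate, and the styles modulate the features multiplicatively. The name `gated_layout_modulation` is hypothetical.

```python
import numpy as np

def gated_layout_modulation(features, layout, styles, gate_logits):
    """Modulate pixel features with per-segment styles, scaled by sigmoid gates.

    features:    (n_pixels, dim) image features
    layout:      (n_pixels, n_segments) per-pixel distribution over segments
    styles:      (n_segments, dim) style latents
    gate_logits: (n_segments,) learnable gate parameters (fixed values here)
    Assumption: multiplicative modulation of the form features * (1 + style).
    """
    gates = 1.0 / (1.0 + np.exp(-gate_logits))       # sigmoid, in (0, 1)
    per_pixel_style = (layout * gates) @ styles      # (n_pixels, dim)
    return features * (1.0 + per_pixel_style)

rng = np.random.default_rng(0)
features = rng.normal(size=(16, 8))
layout = rng.dirichlet(np.ones(3), size=16)          # rows sum to 1
styles = rng.normal(size=(3, 8))

open_gates = gated_layout_modulation(features, layout, styles, np.full(3, 50.0))
closed_gates = gated_layout_modulation(features, layout, styles, np.full(3, -50.0))
```

With the gates closed the features pass through essentially untouched, and with the gates open each segment's style is applied at full strength, which is the kind of per-latent control over modulation strength the paragraph describes.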

4) Semantic Matching and Segment-Fidelity Losses:

Instead of using perceptual and feature-matching losses, a pretrained U-Net segments the generated image, and the cross-entropy between this predicted layout and the source layout serves as the Semantic Matching loss. The intuition is that the other two losses are too severe at the start of training and hinder the diversity of generated scenes.
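
The loss itself is ordinary per-pixel cross-entropy. Here is a minimal sketch, where `pred_logits` stands in for the output of the pretrained segmentation network (which is not modeled here) and the function name is my own.

```python
import numpy as np

def semantic_matching_loss(pred_logits, source_layout):
    """Mean per-pixel cross-entropy between predicted and source layouts.

    pred_logits:   (n_classes, H, W) segmentation logits for the generated image
    source_layout: (H, W) integer class label per pixel
    """
    shifted = pred_logits - pred_logits.max(axis=0, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=0, keepdims=True))
    rows, cols = np.indices(source_layout.shape)
    picked = log_probs[source_layout, rows, cols]    # log-prob of the true class
    return float(-picked.mean())

source_layout = np.array([[0, 1], [2, 1]])
one_hot = np.eye(3)[source_layout].transpose(2, 0, 1)  # (3, 2, 2)

confident_loss = semantic_matching_loss(20.0 * one_hot, source_layout)
uniform_loss = semantic_matching_loss(np.zeros((3, 2, 2)), source_layout)
```

A segmentation that confidently matches the source layout gives a loss close to zero, while a uniform prediction over 3 classes gives log(3).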

The discriminator jointly processes the image along with its source layout, and then partitions the downsampled image according to the segments in the layout and assesses each segment separately to promote semantic alignment between the layout and the image.
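
The partitioning step can be sketched as masked average pooling: the feature map is averaged inside each segment's mask, giving one feature vector per segment that can then be scored on its own. Masked averaging is my assumption about the pooling; the digest only says that segments are assessed separately.

```python
import numpy as np

def segment_features(feature_map, layout):
    """Average the feature map inside each segment of the layout.

    feature_map: (dim, H, W) downsampled discriminator features
    layout:      (n_segments, H, W) soft or one-hot segment masks
    Returns:     (n_segments, dim) one pooled vector per segment
    """
    masks = layout.reshape(layout.shape[0], -1)             # (n_segments, H*W)
    feats = feature_map.reshape(feature_map.shape[0], -1)   # (dim, H*W)
    area = masks.sum(axis=1, keepdims=True)
    return (masks @ feats.T) / np.maximum(area, 1e-8)       # avoid division by zero

# A 2x2 single-channel feature map: left column is 1, right column is 3.
feature_map = np.array([[[1.0, 3.0],
                         [1.0, 3.0]]])
# Segment 0 covers the left column, segment 1 the right column.
layout = np.array([[[1.0, 0.0], [1.0, 0.0]],
                   [[0.0, 1.0], [0.0, 1.0]]])

pooled = segment_features(feature_map, layout)
```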

📈 Experiment insights / Key takeaways:

- Unconditional baselines: plain GAN, StyleGAN2, SA-GAN, VQGAN, k-GAN, SBGAN, GANsformer

- Conditional approaches: SBGAN, BicycleGAN, Pix2PixHD, SPADE

- Training setup: 256x256 resolution, 10 days on a single V100 GPU

- GANsformer2 beats all other baselines on all datasets (CLEVR, Bedrooms, FFHQ, COCO, COCOp, Cityscapes) in terms of almost all metrics (FID, Precision, Recall)

- GANsformer2 has great diversity and semantic consistency. Diversity is measured as the pairwise LPIPS between multiple images generated from a single layout, and consistency as the IoU between segmentations of the generated images and their target layouts.

- GANsformer2 learns a good depth model in an entirely unsupervised manner

- It is possible to manipulate the color, textures, shape, and position of objects in an image, or even add and remove objects in the scene
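
For reference, the IoU-based consistency check from the list above boils down to something like the sketch below. It assumes integer label maps and averages over the classes that appear in either map; the paper's exact averaging may differ, and the segmentation network that produces `pred` is not modeled.

```python
import numpy as np

def mean_iou(pred, target, n_classes):
    """Mean intersection-over-union between two integer label maps."""
    ious = []
    for c in range(n_classes):
        p, t = pred == c, target == c
        union = np.logical_or(p, t).sum()
        if union == 0:
            continue  # class absent from both maps, skip it
        ious.append(np.logical_and(p, t).sum() / union)
    return float(np.mean(ious))

pred = np.array([[0, 0], [1, 1]])      # segmentation of a generated image
target = np.array([[0, 1], [1, 1]])    # the layout it was generated from
score = mean_iou(pred, target, n_classes=2)
```

Here class 0 has IoU 1/2 and class 1 has IoU 2/3, so the mean is 7/12.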

🖼️ Paper Poster:

🛠 Possible Improvements:

- Make the model work in 512x512 or even 1024x1024

- Decrease reliance on training layouts via unsupervised object discovery and scene understanding

- Improve disentanglement and controllability of the latent space

✏️My Notes:

- (3.5/5) for the name. I am conflicted on this one. On the one hand, GANsformer is still a great name; on the other hand, GANsformer2 is a somewhat lazy way to name a model.

- GANsformer was one of my favorite papers from this year, and I am happy to see a new paper out so soon, though unfortunately, no pretrained models or code are available at the time of writing

- Sucks that it still only works for 256x256 :(

- OMG, GANsformer2 + CLIP sounds like a ton of fun for composing complex scenes with text. I can see real-world applications in interior design and stock landscape photos.

- It looks like some ideas from GANsformer2 can be combined with a NeRF-based generator such as GIRAFFE for novel view rendering and 3D scene composition and editing.

- The paper is actually rather dense. I wouldn’t mind a couple more figures detailing each step of the pipeline

- What do you think about GANsformer2? Share your thoughts in the comments!

🔗 Links:

GANsformer2 arxiv / GANsformer2 github / GANsformer2 Demo - ?

👋 Thanks for reading!

By: @casual_gan

P.S. Send me paper suggestions for future posts @KirillDemochkin!